The Problem

Every Java microservice carries a heavy coat of infrastructure: web servers, serialization, DI containers, service discovery, config management, metrics, retry logic, circuit breakers.

Your pom.xml doesn't distinguish business dependencies from infrastructure dependencies. They compile together, deploy together, and break together. A security patch in a web server library means rebuilding and redeploying every service that bundles it.

Routing logic wired in through a DI framework competes with the service mesh for control of the same requests. Retry logic in a cloud SDK stacks on top of an application-level resilience library. These conflicts surface as bugs in production, not during development.
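To make the retry conflict concrete, here is a minimal sketch of two retry layers wrapped around the same call. The class and method names are hypothetical, and `withRetry` stands in for both the SDK's built-in retry and a resilience library. It does not reproduce any real library's API.

```java
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Supplier;

// Hypothetical illustration: two independent retry layers around one call.
final class RetryAmplification {

    /** Retries the call up to maxAttempts times, rethrowing the last failure. */
    static <T> T withRetry(int maxAttempts, Supplier<T> call) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        RuntimeException last = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return call.get();
            } catch (RuntimeException e) {
                last = e; // remember the failure and try again
            }
        }
        throw last;
    }

    /** Counts how many times a permanently failing backend is actually called. */
    static int attemptsAgainstFailingBackend(int sdkAttempts, int appAttempts) {
        AtomicInteger calls = new AtomicInteger();
        Supplier<String> backend = () -> {
            calls.incrementAndGet();
            throw new IllegalStateException("backend unavailable");
        };
        try {
            // Outer layer: stand-in for an application-level resilience library.
            // Inner layer: stand-in for the retry built into an SDK client.
            RetryAmplification.<String>withRetry(
                    appAttempts, () -> withRetry(sdkAttempts, backend));
        } catch (RuntimeException expected) {
            // Both layers have given up; only the call count matters here.
        }
        return calls.get();
    }

    public static void main(String[] args) {
        System.out.println(attemptsAgainstFailingBackend(3, 3)); // prints 9
    }
}
```

Each layer looks reasonable on its own at three attempts. Together they multiply, and nothing in either library's configuration shows the combined number.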

The Architecture

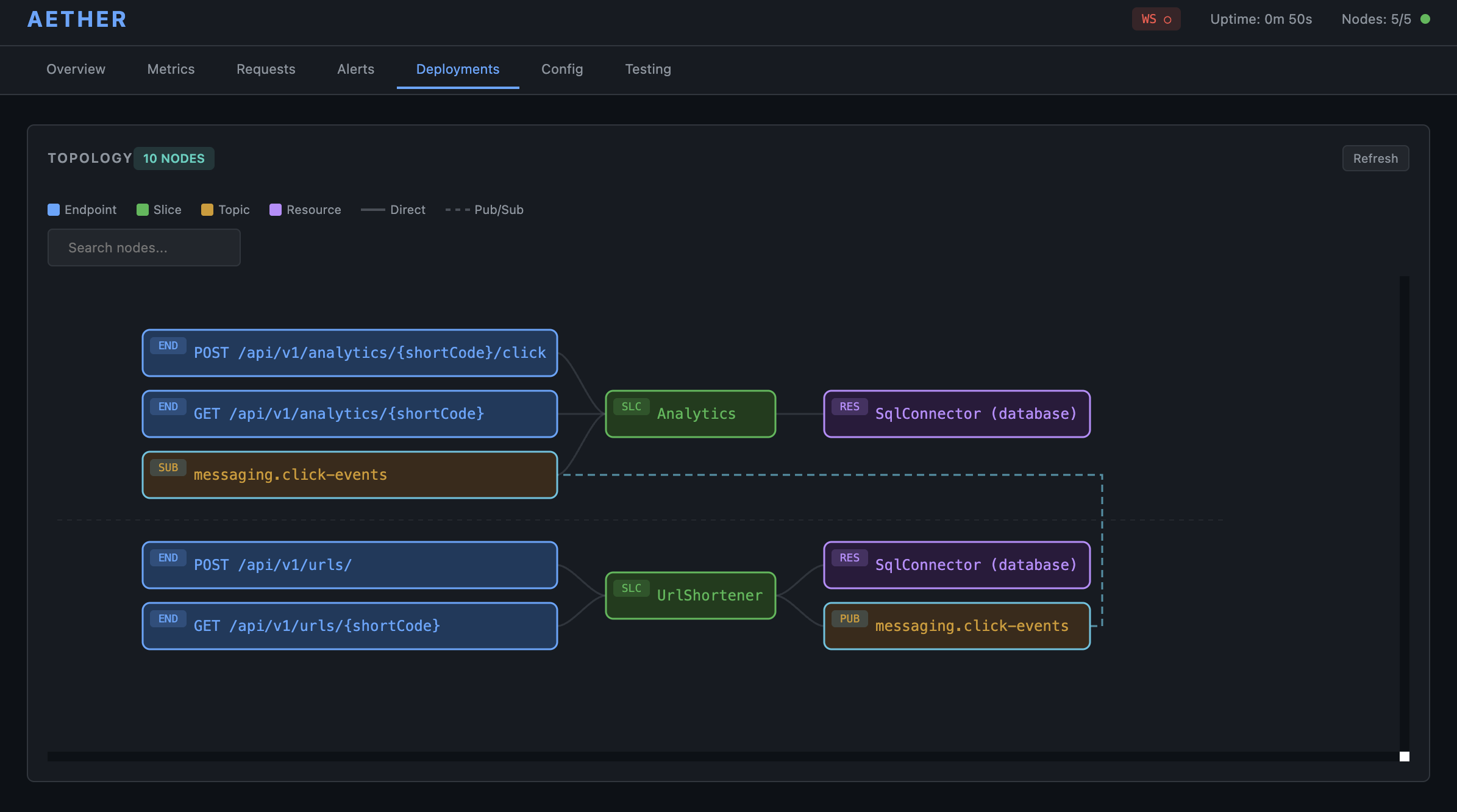

Separate the layers. Let the runtime manage infrastructure — resource provisioning, scaling, transport, discovery, retries, circuit breakers, configuration, observability, security. None of these are application concerns.
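A minimal sketch of what that separation can look like in code, under stated assumptions: `OrderPricing`, `HostRuntime`, and `host` are hypothetical names, and retries stand in for the whole list of infrastructure concerns. The business class imports nothing from the infrastructure side, and the runtime alone owns the policy.

```java
import java.util.function.Function;

// Hypothetical business logic: plain Java with no server, discovery, or retry imports.
final class OrderPricing {
    static final long UNIT_PRICE_CENTS = 1_250;

    static long totalCents(int quantity) {
        if (quantity < 0) {
            throw new IllegalArgumentException("quantity must not be negative");
        }
        return UNIT_PRICE_CENTS * quantity;
    }
}

// Hypothetical stand-in for the runtime layer, which owns the retry policy.
final class HostRuntime {
    private final int maxAttempts;

    HostRuntime(int maxAttempts) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        this.maxAttempts = maxAttempts;
    }

    /** Wraps a handler so that failures are retried according to the runtime's policy. */
    <I, O> Function<I, O> host(Function<I, O> handler) {
        return input -> {
            RuntimeException last = null;
            for (int attempt = 1; attempt <= maxAttempts; attempt++) {
                try {
                    return handler.apply(input);
                } catch (RuntimeException e) {
                    last = e; // the runtime, not the handler, decides to retry
                }
            }
            throw last;
        };
    }
}

final class LayeringDemo {
    public static void main(String[] args) {
        HostRuntime runtime = new HostRuntime(3);
        Function<Integer, Long> endpoint = runtime.host(OrderPricing::totalCents);
        System.out.println(endpoint.apply(4)); // prints 5000
    }
}
```

Changing the retry count here means changing how `HostRuntime` is constructed. `OrderPricing` is not recompiled, and it has no retry setting of its own to drift out of sync.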

To update the runtime, roll it out across nodes without touching applications. To update business logic, deploy new versions without touching infrastructure. Each changes independently, and each without downtime.

When layers don't share a deployment unit, they don't share a deployment schedule.